Trends in the modern data stack - part 1

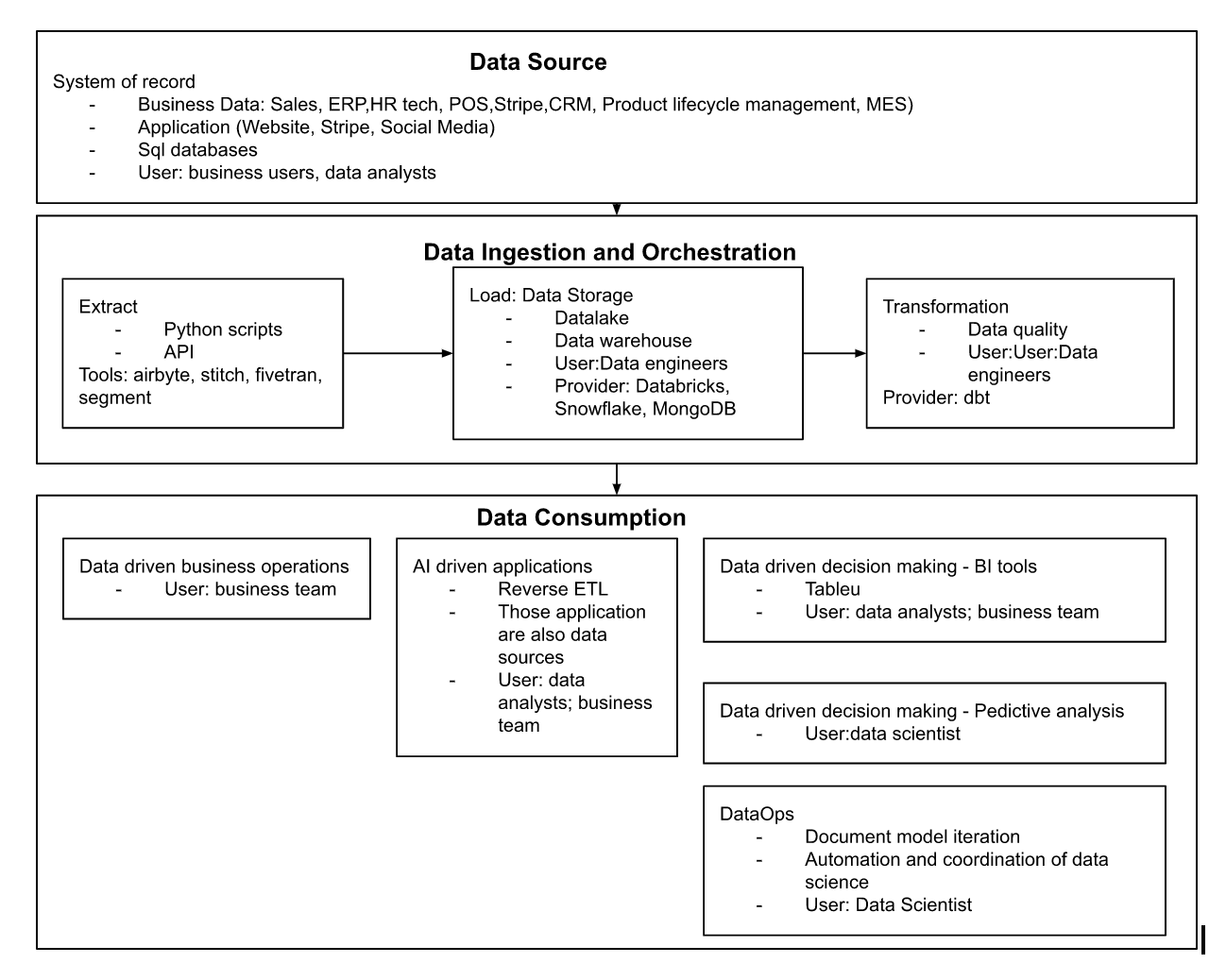

The modern data stack has been a hot topic for the last three years, ever since the surge of scalable cloud-based warehouses (such as Snowflake). As it continues to evolve, I want to share my thoughts on how data flows within an organization and on a few trends within the modern data stack. Granted, each organization is very different. I tried to come up with a general flow by combining my online research with my past enterprise tech experience.

Trend 1: Data Mesh and Data Fabric in distributed infrastructure environments

What is Data Mesh?

Data mesh is an approach to designing data architecture and governance rather than a set of tools. Put simply, data mesh means data decentralization. Each enterprise business function (sales, supply chain, finance, marketing, etc.) owns its data pipeline, sets its own rules on data quality and governance, and decides how it wants to use data to make data-driven decisions.

Juan Sequeda gives perhaps one of the best definitions of data mesh: “It is a paradigm shift towards a distributed architecture that attempts to find an ideal balance between centralization and decentralization of metadata and data management.”

I highly recommend reading Towards a data mesh - part 1 and Towards a data mesh - part 2 by François Nguyen. In this series, he explains the ideal state of data mesh: each team can work on its own subjects while still collaborating and sharing with the others. He puts three streams together to reach that goal: data domains in each function building data-as-a-product, a domain-agnostic shared platform team providing self-serve data infrastructure, and an enabling team managing data governance.

At the end of the day, data mesh allows each function to own its data domain and make data-driven business decisions with reliable and actionable data, supported by a shared platform team, centralized DataGovOps, and IT.
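To make the idea of domain ownership a little more concrete, here is a minimal sketch of a domain-owned data product that carries its own quality rules. Everything in it is an assumption made for illustration: the `DataProduct` class, the rule names, and the sample rows are hypothetical and do not come from any data mesh tool or from the articles cited above.

```python
from dataclasses import dataclass, field
from typing import Callable

# A row is a plain dict here; a real data product would sit on a warehouse table.
Row = dict
Rule = Callable[[Row], bool]


@dataclass
class DataProduct:
    """Hypothetical descriptor for a data product owned by one business domain."""
    name: str
    domain: str  # owning business function, e.g. "sales"
    quality_rules: dict[str, Rule] = field(default_factory=dict)

    def validate(self, rows: list[Row]) -> dict[str, int]:
        """Count, per rule, how many rows violate it."""
        return {
            rule_name: sum(1 for row in rows if not rule(row))
            for rule_name, rule in self.quality_rules.items()
        }


# The sales domain defines its own rules; finance could define different ones.
sales_orders = DataProduct(
    name="orders",
    domain="sales",
    quality_rules={
        "amount_is_positive": lambda r: r["amount"] > 0,
        "has_customer": lambda r: bool(r.get("customer_id")),
    },
)

rows = [
    {"customer_id": "c1", "amount": 120.0},
    {"customer_id": "", "amount": 35.0},
    {"customer_id": "c3", "amount": -5.0},
]
violations = sales_orders.validate(rows)
print(violations)
```

The point of the sketch is only that the rules live with the domain that owns the data, while a shared platform team could supply the generic `validate` machinery.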

What is Data Fabric?

Data fabric, like data mesh, is not a technology in itself but a concept, one that responds to data sources being diverse and scattered. Data fabric is a centralized approach that puts an orchestration policy on top of all data sources, both cloud and on-premises. The goal is to integrate data and prevent silos across the organization.

According to IBM, data fabric is an architecture that facilitates end-to-end integration of various data pipelines and cloud environments, allowing for a cohesive and holistic view of data across different functions and enabling the frictionless access and sharing of data in a distributed network environment.

Data fabric covers a large spectrum of the overall data flow I presented above, including data management, data ingestion, data orchestration, data discovery, and data access.

My thoughts on the trend:

Data mesh and data fabric touch many aspects of the diagram (above) of how data flows in organizations. They are design approaches and architecture concepts rather than technologies. Both enable self-serve access to data, and the sharing of it, in distributed environments, extending beyond technical teams such as data engineers, developers, and analysts.

Data fabric focuses on integrating data and unifying data types and endpoints across business units. Data mesh, on the other hand, enables decentralized data domains with clear data ownership, allowing quick data-driven decisions in a fast-paced business environment, while depending on and being supported by the technology capabilities associated with data fabric.

Data mesh and data fabric concepts help set the foundation for organizational structure, data culture, and who owns what. Understanding them also lets us see how the other trends relate to each other and fit into the big picture of the data diagram (source → ingestion → orchestration → consumption).

Within data mesh, for example, the data domain is associated with the data-as-a-product trend (Trend 5). Domain-agnostic platform teams are more associated with ETL and reverse ETL (Trend 2). Data observability and metric standardization (Trend 2) belong here as well. The enabling team, which is responsible for DataGovOps, is tied to data catalog 3.0 (Trend 4).

I think these trends will continue over the next year. Opportunities within data fabric might emerge in the data discovery and data access pillars, while disruption is already happening in data ingestion and orchestration. Data mesh, on the other hand, will take years to mature as the paradigm shifts. It’s not just about the design of architecture and process, but more about culture change, organizational shift, the embrace of data-driven decision-making by enterprise leadership, and the endorsement of data as the future fuel of business.

From a startup perspective, the design philosophy an enterprise adopts around data mesh and data fabric will ultimately determine who the sponsors, decision-makers, and stakeholders are for any data-related product sale.

Trend 2: ETL to ELT, now reverse ETL

ETL (Extract, Transform, Load) happens in the data ingestion and orchestration layer. In the past, ETL was the only way. The extracted data moved from the processing server to the data warehouse only once it had been successfully transformed. That worked well for structured data but sacrificed the potential of a great deal of unstructured and raw data.

Unlike ETL, ELT (Extract, Load, Transform) does not require data transformations before the loading process. Data cleansing, enrichment, and transformation occur within the data warehouse. Raw data is stored indefinitely in the data warehouse, allowing for multiple transformations and many use cases that weren’t possible before. ELT was made possible only by the arrival of scalable cloud-based data warehouses. Changing the order of transformation and loading also opens up new opportunities, such as real-time data analytics.
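The difference in ordering is easier to see in a small example. The sketch below is only an illustration under stated assumptions: an in-memory SQLite database stands in for a cloud data warehouse, and the table names (`raw_orders`, `clean_orders`) and sample records are made up. The raw data is loaded untouched first, and the cleansing happens afterwards, inside the "warehouse", with SQL.

```python
import sqlite3

# In-memory SQLite stands in for a cloud data warehouse (an assumption for this sketch).
warehouse = sqlite3.connect(":memory:")

# Extract: raw records as they might arrive from a source system.
raw_events = [
    ("2023-01-05", " Alice ", "42.50"),
    ("2023-01-05", "bob", "not-a-number"),
    ("2023-01-06", "ALICE", "10.00"),
]

# Load: store the raw data untouched, so it can be re-transformed later.
warehouse.execute(
    "CREATE TABLE raw_orders (order_date TEXT, customer TEXT, amount TEXT)"
)
warehouse.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_events)

# Transform: cleanse inside the warehouse with SQL, after loading.
warehouse.execute(
    """
    CREATE TABLE clean_orders AS
    SELECT order_date,
           lower(trim(customer)) AS customer,
           CAST(amount AS REAL)  AS amount
    FROM raw_orders
    WHERE amount GLOB '[0-9]*.[0-9]*'
    """
)

totals = warehouse.execute(
    "SELECT customer, SUM(amount) FROM clean_orders GROUP BY customer ORDER BY customer"
).fetchall()
raw_count = warehouse.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
print(totals, raw_count)
```

Because `raw_orders` is kept as loaded, a different transformation (for instance, one that keeps the malformed row for auditing) can be built later without going back to the source system. In an ETL flow, the malformed row would have been dropped before it ever reached the warehouse.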

As enterprises undergo waves of digital transformation, executives and leadership teams have become aware of the power of data-driven decision-making. But it’s hard to make data-driven decisions if the data is not actionable. The lack of consistent, good-quality data flowing across applications has been a big problem. Luckily, with ELT becoming the new standard, the data warehouse becomes the single source of truth for data, including data that used to be spread across different systems. Reverse ETL is the process of moving data from the data warehouse into downstream applications. This approach operationalizes a great deal of data that wasn’t available to those applications before. Enterprises can now use up-to-date data directly in the applications where they make decisions and pursue business goals. This wasn’t possible with traditionally scattered and unsynchronized data.
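As a rough illustration of the reverse ETL direction, the sketch below copies a metric from the warehouse into a downstream application. It rests on several assumptions: SQLite again stands in for the warehouse, the "CRM" is just a Python dictionary, and the `customer_metrics` table and `sync_to_crm` function are hypothetical names. A real reverse ETL tool would call the application's API instead.

```python
import sqlite3

# In-memory SQLite stands in for the warehouse; the "CRM" is a plain dict.
warehouse = sqlite3.connect(":memory:")
warehouse.execute(
    "CREATE TABLE customer_metrics (customer_id TEXT, lifetime_value REAL)"
)
warehouse.executemany(
    "INSERT INTO customer_metrics VALUES (?, ?)",
    [("c1", 1200.0), ("c2", 80.0)],
)

# Hypothetical downstream application state, keyed by customer id.
crm_records = {
    "c1": {"name": "Acme", "lifetime_value": None},
    "c2": {"name": "Globex", "lifetime_value": 75.0},
}


def sync_to_crm(conn, crm):
    """Copy warehouse metrics into CRM records; return how many records changed."""
    updated = 0
    for customer_id, ltv in conn.execute(
        "SELECT customer_id, lifetime_value FROM customer_metrics"
    ):
        record = crm.get(customer_id)
        # Only write when the CRM value differs from the warehouse value.
        if record is not None and record["lifetime_value"] != ltv:
            record["lifetime_value"] = ltv
            updated += 1
    return updated


changed = sync_to_crm(warehouse, crm_records)
second_pass = sync_to_crm(warehouse, crm_records)
print(changed, second_pass)
```

The second call changes nothing because the application already matches the warehouse, which is the sense in which the warehouse acts as the single source of truth.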

My thoughts on the trend:

It is fun to watch how the space of data ingestion, orchestration, and synchronization is taking shape. As an investor, I think there will be a lot of consolidation among reverse ETL tools in the next few years. The tools themselves aren’t as groundbreaking as dbt has been for the T (transformation), and their use cases are straightforward. I could see data warehouse and data lake vendors acquiring reverse ETL tools to broaden their offerings and capabilities.

On the other hand, I still think there are opportunities in transformation and data quality. Data, as the fuel for machine learning applications, needs a lot of quality control, cleansing, and transformation. Data engineers spend hours and hours detecting anomalies and getting data ready for AI/ML applications. In that space, I'm seeing startups building an open-source community around dbt (re-data), startups focusing on using ML/AI to ensure data quality (Stemma), and startups using no-code tools that let business users transform the data the way they prefer (Estuary). Overall, I am very excited to see how this space will continue to evolve, catering to the needs of businesses and users (data engineers) in the next few years.